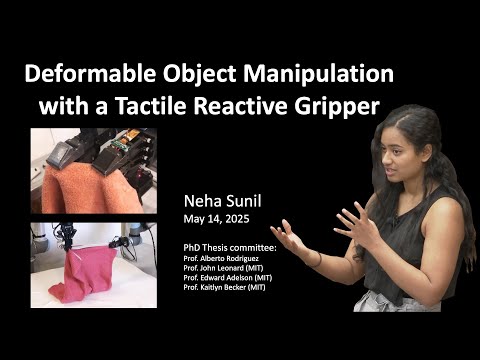

This research presents a modular visuotactile robotic system for manipulating deformable objects such as cables, towels, and garments. Unlike rigid objects, deformables are hard to manipulate because of occlusion, complex dynamics, and high variability. The system combines vision for global context with tactile sensing (GelSight) for precise local control, enabling tasks like cable tracing, cloth edge following, towel folding, and garment handling. It uses reactive control, LQR control over learned dynamics, affordance models, and dense correspondence to generalise across tasks and objects. A key innovation is the shift from global state estimation to local, feedback-driven manipulation, which improves robustness, efficiency, and real-world applicability in domains like manufacturing, healthcare, and assistive robotics.

2025

This research uses cavefish to reveal how evolution reshapes the brain. By comparing surface and cave-adapted forms, it shows that neural circuits no longer needed for vision are repurposed for touch and smell. These findings demonstrate how evolution refines existing brain structures to meet environmental demands.

This thesis introduces Armando, a low-cost soft robotic gripper that achieves proprioceptive sensing with a single flexible capacitive sensor and neural-network decoding. Reaching 99% accuracy, Armando provides precise finger-position estimation for applications in prosthetics, assistive care, and disaster response, advancing accessible tactile robotics inspired by human touch.