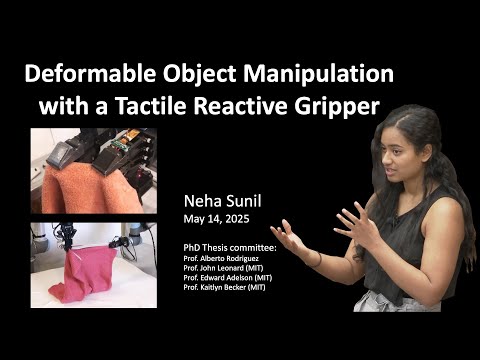

This research presents a modular visuotactile robotic system for manipulating deformable objects such as cables, towels, and garments. Unlike rigid objects, deformable objects are hard to manipulate because of occlusion, complex dynamics, and high variability. The system combines vision for global context with tactile sensing (GelSight) for precise local control, enabling tasks such as cable tracing, cloth edge following, towel folding, and garment handling. It uses reactive control, learned dynamics models paired with LQR control, affordance models, and dense correspondence to generalise across tasks and objects. A key innovation is the shift from global state estimation to local, feedback-driven manipulation, which improves robustness, efficiency, and real-world applicability in domains such as manufacturing, healthcare, and assistive robotics.
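As a rough illustration of how learned dynamics and LQR can fit together, the sketch below fits a scalar linear model to logged transitions and then derives a feedback gain from it. It is a minimal example under stated assumptions, not the system's implementation: the scalar state (for instance, a cable's lateral offset in the gripper), the function names `fit_scalar_dynamics` and `lqr_gain`, and the cost weights are all hypothetical.

```python
def fit_scalar_dynamics(xs, us, x_nexts):
    """Least-squares fit of x_next ~ a*x + b*u from logged transitions.

    Hypothetical helper: solves the 2x2 normal equations by hand.
    """
    sxx = sum(x * x for x in xs)
    suu = sum(u * u for u in us)
    sxu = sum(x * u for x, u in zip(xs, us))
    sxy = sum(x * y for x, y in zip(xs, x_nexts))
    suy = sum(u * y for u, y in zip(us, x_nexts))
    det = sxx * suu - sxu * sxu
    if det == 0:
        raise ValueError("data does not excite both state and input")
    a = (sxy * suu - sxu * suy) / det
    b = (sxx * suy - sxu * sxy) / det
    return a, b


def lqr_gain(a, b, q=1.0, r=1.0, iters=500):
    """Scalar discrete-time LQR gain via Riccati iteration.

    Minimises sum(q*x^2 + r*u^2) under x_next = a*x + b*u with u = -k*x.
    """
    p = q
    k = 0.0
    for _ in range(iters):
        k = (b * p * a) / (r + b * b * p)
        p = q + a * p * (a - b * k)
    return k
```

With a fitted pair `(a, b)`, the control at each step is `u = -lqr_gain(a, b) * x`, so the controller reacts to the locally sensed error rather than to a global state estimate.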

2025

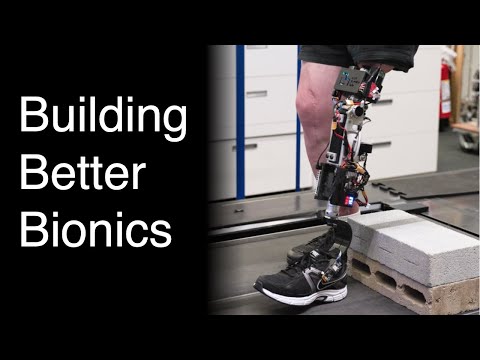

This defense argues that prostheses must exchange information, not just energy, with the nervous system. It combines soft-tissue agonist-antagonist myoneural interface (AMI) revision, osseointegration with implanted electrodes (OMP), and impedance control to enable continuous powered-knee modulation. Pilot studies show improved neuromechanics, cortical activation, cleaner EMG, better task performance, and greater embodiment compared with socket-based approaches.
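For readers unfamiliar with impedance control, the sketch below shows the generic spring-damper form of the law and one way a continuous signal could modulate it. It is a minimal illustration, not the controller from the defense: the function names, the gain ranges, and the idea of scaling stiffness by a normalised activation value in [0, 1] are assumptions made for the example.

```python
def blend(low, high, activation):
    """Interpolate between two values using an activation clamped to [0, 1]."""
    a = min(max(activation, 0.0), 1.0)
    return low + a * (high - low)


def impedance_torque(theta, theta_dot, stiffness, damping, theta_eq):
    """Joint torque from a spring-damper impedance law.

    theta, theta_eq: joint angle and equilibrium angle (rad)
    theta_dot: joint velocity (rad/s)
    stiffness: N*m/rad, damping: N*m*s/rad
    """
    return stiffness * (theta_eq - theta) - damping * theta_dot


def knee_torque(theta, theta_dot, activation, theta_eq=0.0):
    """Hypothetical continuous modulation: activation scales joint stiffness."""
    stiffness = blend(20.0, 200.0, activation)  # assumed range, N*m/rad
    damping = 2.0                               # assumed constant, N*m*s/rad
    return impedance_torque(theta, theta_dot, stiffness, damping, theta_eq)
```

Because the activation enters as a continuous scale factor rather than a mode switch, the commanded torque varies smoothly as the input signal changes.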