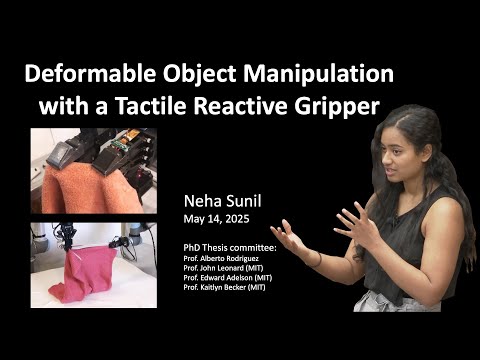

This research presents a modular visuotactile robotic system for manipulating deformable objects such as cables, towels, and garments. Unlike rigid objects, deformables are challenging to manipulate because of occlusion, complex dynamics, and high variability. The system combines vision for global context with tactile sensing (GelSight) for precise local control, enabling tasks such as cable tracing, cloth edge following, towel folding, and garment handling. It uses reactive control, learned dynamics models with LQR control, affordance models, and dense correspondence to generalise across tasks and objects. A key innovation is the shift from global state estimation to local, feedback-driven manipulation, which improves robustness, efficiency, and real-world applicability in domains such as manufacturing, healthcare, and assistive robotics.

2025

2025

This thesis developed a real-time system for detecting, classifying, and localizing sound events using only audio data. Using a network of 16 microphones and deep learning techniques, it achieved 96% classification accuracy and an average localization error of 1.4 meters, demonstrating that sound-based analysis can effectively replace vision in monitoring applications.